Neurons, weights and biases with analogies

April 9, 2026

A neuron does not “understand” digits, faces, or words. It just takes in numbers, combines them, and produces a new number. That is the job of neurons in a neural network.

They turn raw inputs into signals the next layer can use. When you stack many neurons across layers, those simple signals can add up to surprisingly powerful behaviour.

The goal of this post is simple: to help you build a clear, intuitive picture of what a neuron is, what weights do, and what a bias does, using two everyday analogies.

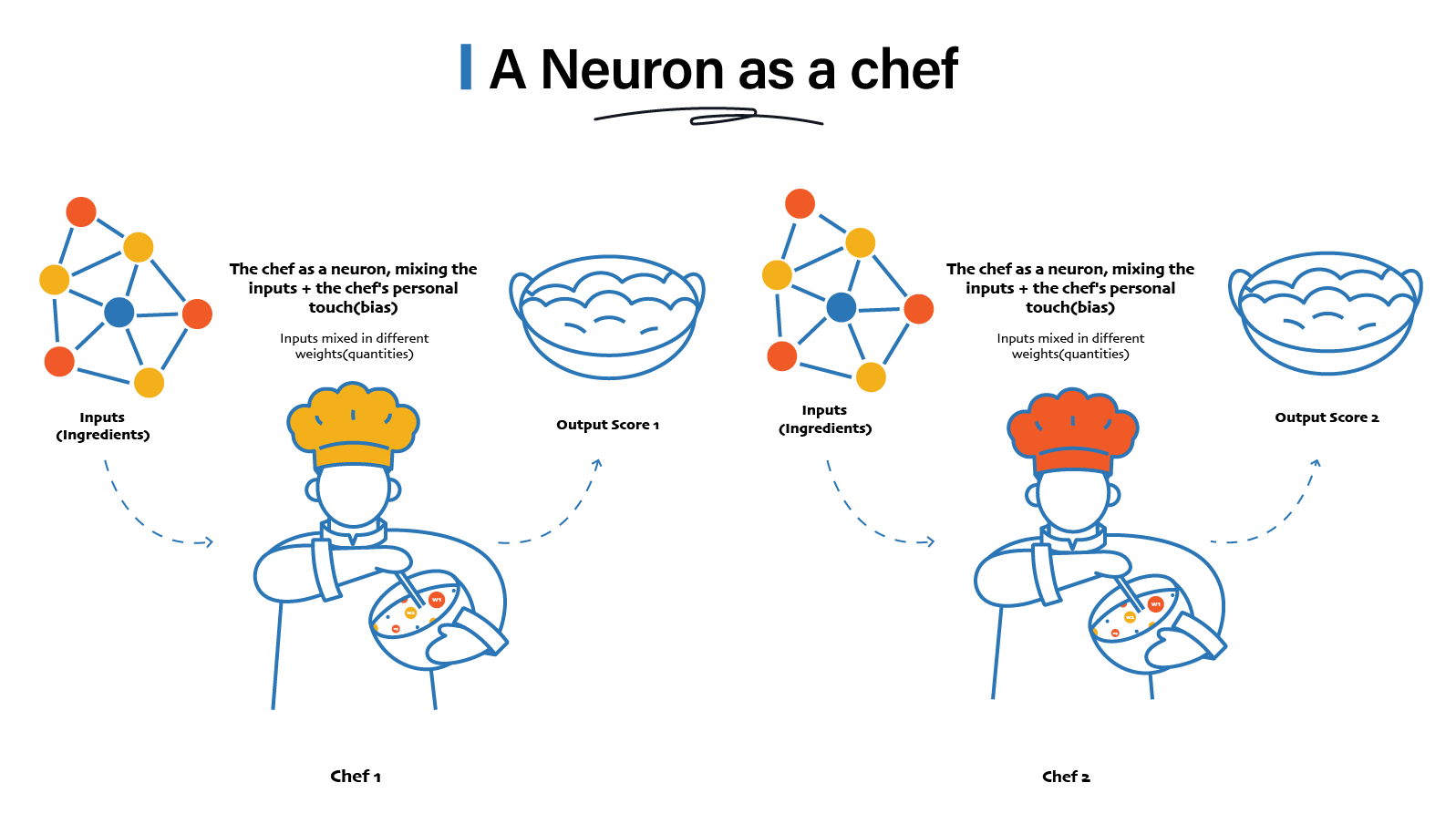

A neuron as a chef

Think of a neuron as a chef in a restaurant.

- Ingredients come in. These are the inputs.

- The chef mixes them in specific quantities. These are the weights.

- Then the chef adds a personal touch. That is the bias.

Two chefs can receive the same ingredients but produce different meals, because they use different quantities and add a different personal touch.

That is exactly what is happening in a neural network.

- Each neuron receives numbers.

- Each neuron mixes them differently.

- Each neuron produces its own output score.

Now let’s talk about those “quantities” (the weights) and that “personal touch” (the bias).

Weights: A non-technical view

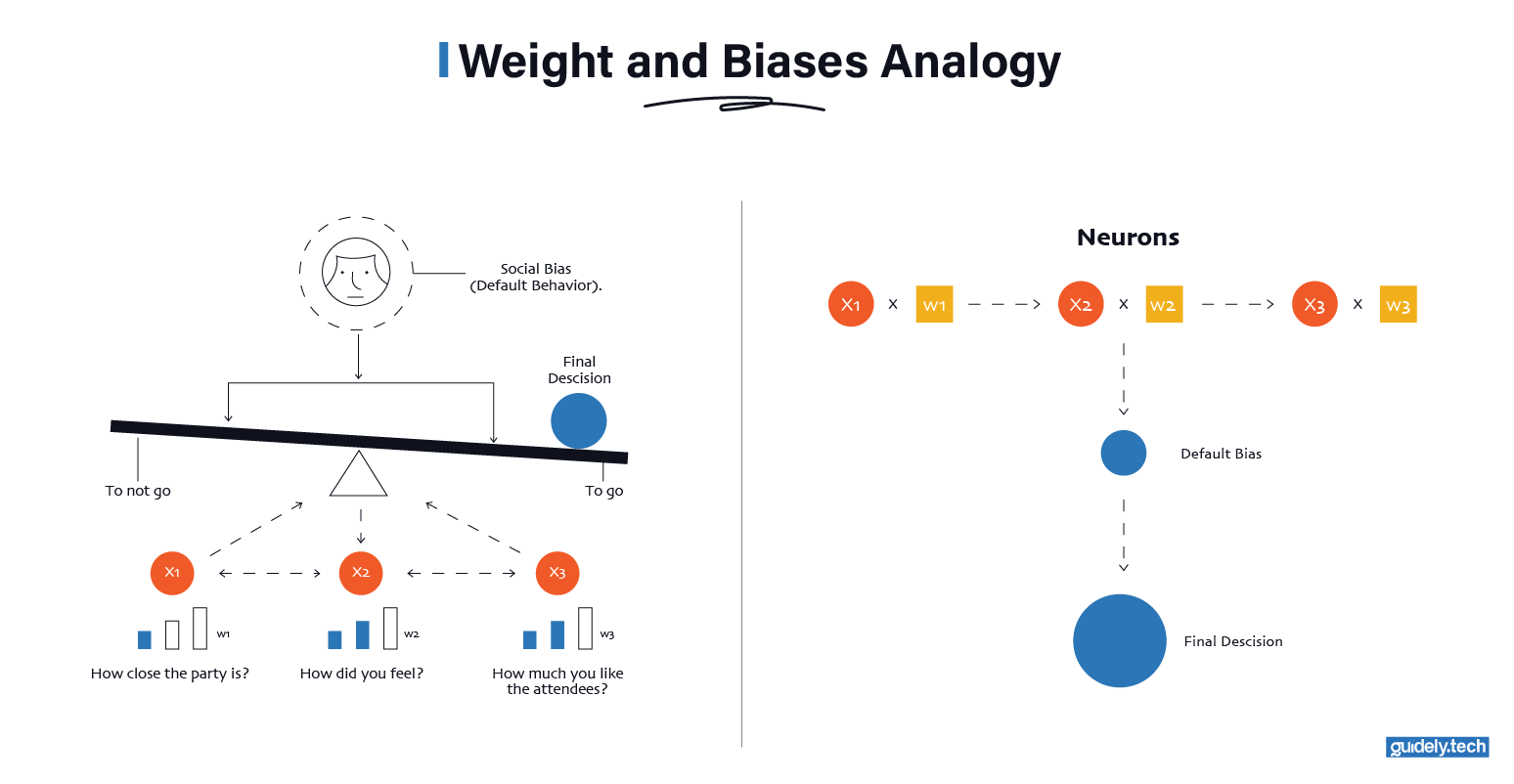

Suppose you are deciding whether to attend a party and your decision depends on three factors:

- $x_1$: How close the party is.

- $x_2$: How tired you are.

- $x_3$: How much you like the people attending.

You do not weigh these equally.

- Distance might matter a little.

- Tiredness might matter a lot.

- Friends might matter even more.

Each factor pushes your decision in one direction or the other, with different strengths. Those “strengths” are weights. You might mentally combine them like this:

- A nearby party nudges you toward going.

- Being very tired pulls you strongly toward staying home.

- Close friends pull you back toward going.

You do not consciously multiply numbers. But you are doing something similar. You are combining signals. Each signal has a different importance. You end up with one internal score: “Do I go or not?”

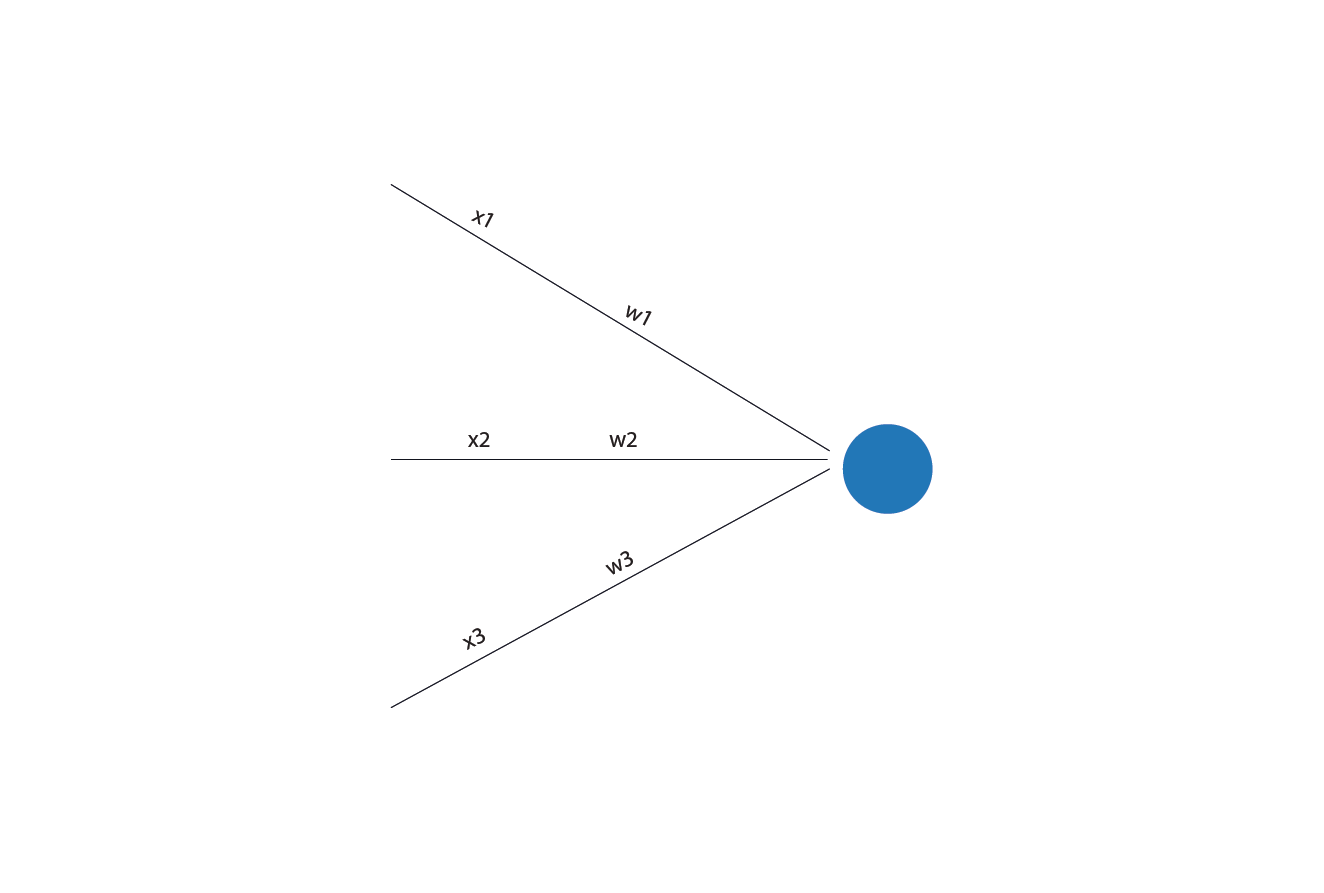

A neuron does the same thing, but more mathematically.

- Each input number is multiplied by its weight.

- All those weighted inputs are summed.

- The result is a single score that reflects how strong the overall evidence is.
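The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `weighted_sum`, the 0-to-1 scoring of each party factor, and the weight values are all assumptions chosen to match the party example.

```python
def weighted_sum(inputs, weights):
    """Multiply each input by its weight and add up the results."""
    return sum(x * w for x, w in zip(inputs, weights))


# Hypothetical party factors, each scored from 0 (low) to 1 (high).
inputs = [0.8, 0.9, 0.5]    # closeness, tiredness, liking for the guests

# Hypothetical weights: the sign is the direction of the push,
# the size is how much the factor matters.
weights = [0.5, -2.0, 3.0]  # distance a little, tiredness a lot, friends even more

score = weighted_sum(inputs, weights)
print(f"score = {score:.2f}")
```

With these made-up numbers the pushes nearly cancel, so the score lands just above zero: the evidence is conflicting, and the neuron barely responds.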

If the evidence points strongly in one direction, the neuron responds strongly. If the evidence is weak or conflicting, the neuron barely responds.

So when we say “this neuron cares more about certain inputs”, we mean something very literal: those inputs have larger weights (in size, whether positive or negative), so they contribute more to the neuron’s final score.

Bias: the baseline tendency

There is one more piece before the neuron makes a decision: the bias.

The bias is a built-in offset. It controls how easily a neuron responds. When a neuron responds strongly enough to pass a meaningful signal forward, we say the neuron activates (or fires). These two words mean the same thing, and from this point on, we will mostly use the term activation.

Even before looking at the inputs, the neuron starts with a small push toward either activation or silence. A positive bias makes activation easier. The neuron needs less evidence from its inputs to activate. A negative bias makes activation harder. The inputs must collectively be stronger before the neuron responds.
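Here is a small sketch of that effect. It assumes, purely for illustration, that the neuron activates when evidence plus bias rises above zero. The function `activates`, the threshold, and all the numbers are hypothetical, and real networks typically use smoother activation rules.

```python
def activates(evidence, bias, threshold=0.0):
    """Return True when evidence plus bias exceeds the threshold.

    The hard threshold at 0 is an assumption made for this sketch.
    """
    return evidence + bias > threshold


# Hypothetical amounts of evidence coming from the weighted inputs.
for evidence in (0.5, 0.0, -0.5):
    for bias in (1.0, 0.0, -1.0):
        result = activates(evidence, bias)
        print(f"evidence={evidence:+.1f}  bias={bias:+.1f}  activates={result}")
```

With a bias of +1.0 the neuron activates even when the evidence is slightly negative. With a bias of -1.0 it stays silent for all three evidence values, because none of them is strong enough to overcome the offset.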

In simple terms, the bias sets the neuron’s default tendency. It decides whether the neuron is eager to activate or cautious, even before any inputs are considered.

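The bias is usually added to the weighted sum. Mathematically, the neuron computes:

$$z = w_1 x_1 + w_2 x_2 + \dots + w_n x_n + b$$

where $x_1, \dots, x_n$ are the inputs, $w_1, \dots, w_n$ are their weights, and $b$ is the bias.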

But why is the bias needed? Without it, a neuron whose inputs are all zero can only produce a score of zero. Sometimes, even if all inputs are zero or small, you still want the neuron to have a baseline tendency to activate.

Back to the party example. Even if the party is not close, you are somewhat tired, and the guest list is unknown, you might still go because you are generally social. That “default tendency” is the bias. Some people are naturally social and lean toward going even with weak reasons. Others need strong reasons to say yes.

Your final decision comes from combining the factors, weighting them, adding your baseline tendency, and seeing where you land. A neuron does the same thing, just with numbers.

Bringing it all together

The chef analogy and the party analogy describe the same underlying idea.

A neuron takes in inputs, weighs how much each one matters, adds a baseline push, and produces an output score. Many neurons doing this in layers is how a neural network turns raw numbers into a useful prediction.
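To make that concrete, here is a minimal sketch of two neurons (two "chefs") receiving the same inputs. The function names `neuron` and `layer` and every number are made up for illustration. The sketch also stops at the raw score, whereas real networks usually pass that score through an activation function as well.

```python
def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus the bias: one output score."""
    return sum(x * w for x, w in zip(inputs, weights)) + bias


def layer(inputs, neurons):
    """Feed the same inputs to every neuron and collect the scores."""
    return [neuron(inputs, weights, bias) for weights, bias in neurons]


inputs = [1.0, 2.0, 3.0]  # hypothetical input numbers (the ingredients)

# Two "chefs": same ingredients, different quantities and personal touch.
chefs = [
    ([1.0, 0.0, -1.0], 0.5),   # (weights, bias) for the first neuron
    ([0.5, 0.5, 0.5], -1.0),   # (weights, bias) for the second neuron
]

scores = layer(inputs, chefs)
print(scores)  # [-1.5, 2.0]
```

The same three numbers go in, but each neuron produces its own score because its weights and bias differ.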

If you want the full story, including how layers work together and how these weights and biases are learned during training, read our detailed neural networks guide on Guidely.
